Hannah Devinney, doctoral student in the Department of Computing Science and the Umeå Centre for Gender Studies, Umeå University, has studied how social biases about sex and gender, including trans, non-binary and queer identities, are reflected in language technologies. On 18 April 2024, they will defend their doctoral thesis entitled Gender and representation: Investigations of Social Bias in Natural Language Processing.

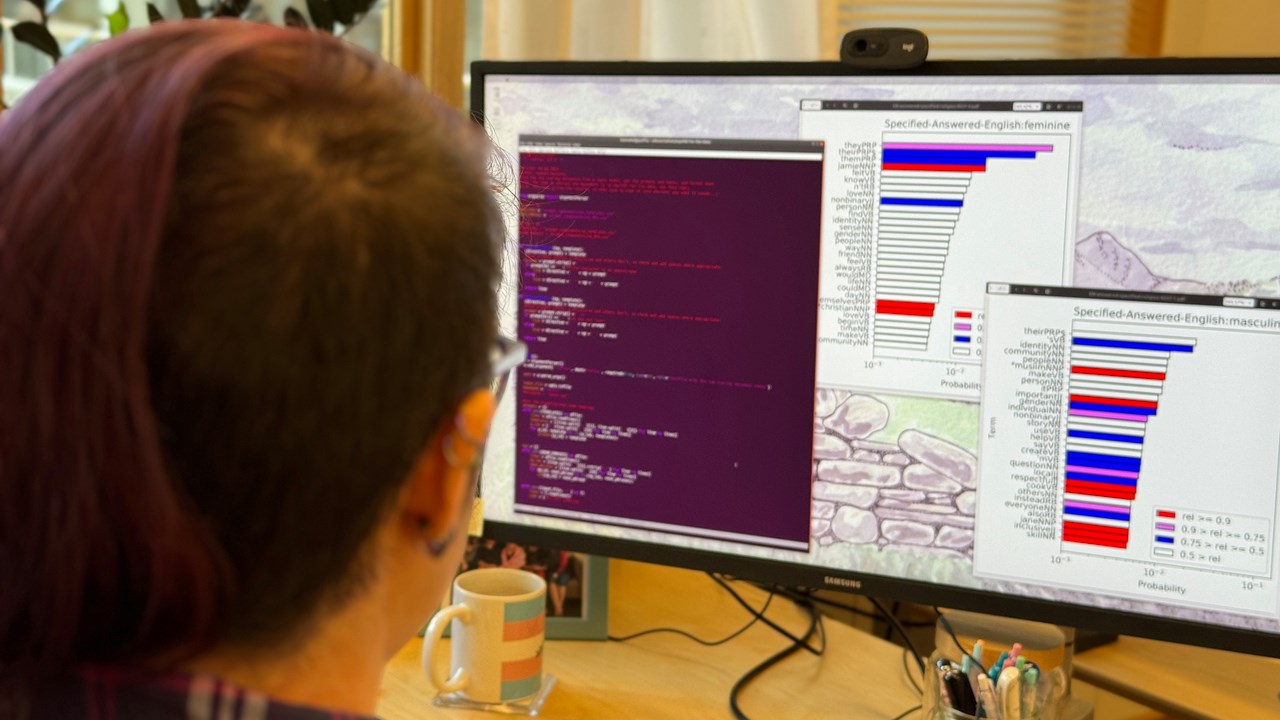

Hannah Devinney, doctoral student, Department of Computing Science and Umeå Centre for Gender Studies. Image: Henrik Bjelkstål

We interact with technologies that process human language (Natural Language Processing, NLP) every day, whether in forms we see (autocorrect, translation services, or search results on Google, for example) or in forms we don’t (social media algorithms, “suggested reading” on news sites, spam filters). NLP also powers other tools under the Artificial Intelligence (AI) umbrella, such as systems that sort CVs or approve loan applications, making decisions that can have a major impact on our lives. As ChatGPT and other large language models have become popular, we are also increasingly exposed to machine-generated texts.

Machine learning methods, which underlie many modern NLP tools, replicate patterns in data, such as texts written by humans. However, many of these patterns are undesirable, including stereotypes and other reflections of human prejudice. These prejudices are present, both implicitly and explicitly, in the language that developers “show” computers when training these systems. When such patterns appear in computer systems, the scientific community calls them “bias.”

Hannah Devinney’s research focuses on such patterns as they relate to gender, particularly, but not only, trans and non-binary notions of gender, as this group has often been left out of the conversation around “gender bias.” Hannah is trying to understand how gender affects the way language technologies treat different people, and how this relates to complicated systems of social power that are often reflected in and maintained by language.

Hannah approaches the problem from three angles: how “gender” is conceptualized and defined in research on bias in NLP; how “bias” manifests in the datasets used to train language technologies; and how gender appears in the output of some of these technologies. They are also looking for ways to improve representation in order to counter some of these biases.

Datasets with trillions of words

When Hannah looks at language data in the form of texts such as news articles or other stories, they use a “mixed methods” approach, combining quantitative and qualitative methods. This is necessary because the datasets used to train modern NLP tools are far too large to analyze qualitatively in full.

"The data sets are billions or trillions of words in size, which means even a very fast reader would take hundreds of years of doing nothing else except sleeping to get through all of the texts. But at the same time, 'bias' is a really complicated phenomenon, so if we just look at it numerically we likely won’t really understand what we’re seeing and its consequences," says Hannah.

One mixed-methods approach they have developed with their research colleagues is EQUIBL (“Explore, Query, and Understand Implicit Bias in Language data”). It uses a computational technique called topic modeling as a kind of filter to identify patterns of association between words in the texts.

"We have chosen the parts that we think are important for understanding how gender is represented in those texts, and then we qualitatively analyze a much smaller amount of information to see how bias is actually happening, rather than just identifying its presence," they say.

The main theme of their findings is that research on language technologies, language data, and the technologies themselves are highly cisnormative (meaning they assume everyone’s gender matches the sex they were assigned at birth) and often very stereotypical and limiting.

In language data, women are more strongly associated than men with home, family, and relational concepts like “communication,” while men are often treated as the “default” person. Trans and non-binary people are often erased altogether: recent NLP research tends to leave non-binary people out, they are absent from much of the training data, and many tools cannot even recognize or consistently use “newer” non-binary pronouns such as hen in Swedish. When non-binary people do appear, it is often in stereotypical narratives.

Another important finding is that bias is complicated and culturally dependent. For example, Hannah found that some patterns of “bias” differ between English and Swedish news data. According to Hannah, this makes such bias difficult to counter, because culture, and what researchers consider “not biased,” are always changing.

“That can feel overwhelming, but at the same time I think it’s a really cool opportunity to really consider what kinds of linguistic worlds we want to build and inhabit.”

One of the big challenges was a lack of data that includes non-binary and trans identities. "A key reason that many NLP tools erase non-binary people is that the group is not well-represented in the kinds of language data used to train the systems, so this challenge is in a sense itself one of the things I study," says Hannah Devinney.

Across the different case studies in the thesis, they have both “augmented” existing datasets by changing sentences to use non-binary pronouns (for example, changing “han är student”, “he is a student”, to “hen är student”, using the gender-neutral Swedish pronoun hen) and built new datasets by collecting web content from queer news sites and discussion forums.
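The article does not describe exactly how the augmentation was carried out. As a hypothetical illustration only, a minimal Python sketch of word-level pronoun substitution might look like this, assuming a simple mapping from the Swedish pronouns han/hon (and their object and possessive forms) to hen/hens. The names `PRONOUN_MAP`, `augment` and `augment_dataset` are invented for this example and are not taken from the thesis.

```python
import re

# Hypothetical mapping from Swedish gendered pronouns to gender-neutral "hen" forms.
# Object form uses "hen" (some writers prefer "henom"); possessive uses "hens".
PRONOUN_MAP = {
    "han": "hen",
    "hon": "hen",
    "honom": "hen",
    "henne": "hen",
    "hans": "hens",
    "hennes": "hens",
}

# Longest forms first, and \b word boundaries so "handen" or "hennes" are not
# partially matched by the shorter pronouns.
_PATTERN = re.compile(
    r"\b(" + "|".join(sorted(PRONOUN_MAP, key=len, reverse=True)) + r")\b",
    re.IGNORECASE,
)


def _replace(match: re.Match) -> str:
    word = match.group(0)
    new = PRONOUN_MAP[word.lower()]
    # Keep sentence-initial capitalisation ("Hon" -> "Hen").
    return new.capitalize() if word[0].isupper() else new


def augment(sentence: str) -> str:
    """Return a copy of the sentence with gendered pronouns swapped for hen forms."""
    return _PATTERN.sub(_replace, sentence)


def augment_dataset(sentences):
    """Keep every original sentence and add its non-binary variant when it differs."""
    out = []
    for s in sentences:
        out.append(s)
        variant = augment(s)
        if variant != s:
            out.append(variant)
    return out


if __name__ == "__main__":
    print(augment("han är student"))  # hen är student
```

A word-level swap like this is deliberately naive: it would also rewrite the first name Hans, and it cannot tell when a pronoun refers to someone whose gender should be kept, so a real augmentation pipeline would need more careful handling.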

Hannah notes that “bias” and “AI” are being talked about a lot right now, especially as technology companies market large language models as “AI” or as “thinking,” rather than as tools that simply repeat statistically likely strings of words.

“I think people are right to be worried about fairness and the risks associated with these technologies.”

The risks and unfairness aren’t new, since language technologies have been around for a long time, but the scale of these technologies, and the attention and money they attract, are extremely high right now, so the average person is more aware that they exist.

"My work complicates how we as researchers and computer scientists understand ‘bias’ (that’s good, because bias is a social phenomenon, and those are by nature usually complicated!) so that we can grasp some of the nuances of how language, technology, and society work together to make inequalities, as well as hopefully work towards countering them," they say.

Hannah Devinney will start as a postdoc with Tema Genus at Linköping University in August, as part of Katherine Harrison’s WASP-HS project “Operationalising ethics for AI: translation, implementation and accountability challenges.”

Their part of the project will be about queer, trans, and non-binary representation in synthetic data.